Continuing our new Engineering blog series, perception engineer Kayla Comalli will illuminate Google Cloud Functions (GCFs).

A fairly recent addition to the Google Cloud toolbox, Google Cloud Functions (GCFs) deliver value quickly by letting developers run application code without managing servers. It’s always exciting to see the powerful applications that arise from new tools, so this seemed like a cool opportunity to discuss the tech behind them. We’ll break down the components of GCF from five high-level angles and explore the applications 6 River Systems (6RS) has built with it.

Now let’s dig into the who, what, when, where, and why of 6RS’s choice to implement GCFs.

What are Google Cloud Functions?

Oftentimes, when one imagines a cloud computing architecture, what comes to mind is some monolithic and über-complicated behemoth. From Kubernetes clusters to big data storage to networking, simply grokking how these pieces interconnect isn’t always straightforward. A comprehensive grasp of distant concepts and their jargon may feel like a labyrinthine yet necessary step in building a bridge between your local application and cloud computing.

Yet despair not! There exists a direct route to networking your apps, as the crow flies, sans barrier.

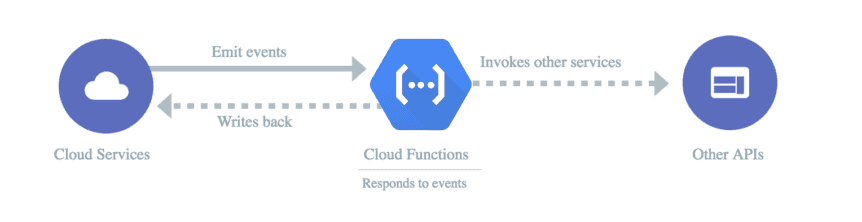

In 2017, Google Cloud released a beta feature that promised a remedy to the logistical pain points of server builds. These “functions as a service” (FaaS) are pieces of code that perform single-purpose behaviors in response to events. Attach them to triggers like HTTP requests, Pub/Sub messages, or Cloud Storage changes, and a custom function that you build executes automatically. A function for data manipulation, intermediate logging, API bridging: anything! Node.js, Python, Java, and Go are all supported languages for implementing behavior, and the code is wrapped up in inline, zipped, or cloud-sourced packages.
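To make the trigger idea concrete, here is a minimal sketch of what a Pub/Sub-triggered function could look like in Python. It assumes the background-function style, where the handler receives an `event` dictionary whose `data` field holds the message body as base64 text. The name `handle_message`, the `processed` flag, and the sample payload are hypothetical and not taken from 6RS code.

```python
import base64
import json


def handle_message(event, context=None):
    """Sketch of a handler that runs once per Pub/Sub message.

    `event["data"]` carries the message body as base64-encoded text.
    """
    raw = base64.b64decode(event.get("data", "")).decode("utf-8")
    payload = json.loads(raw) if raw else {}
    # Single-purpose behavior: here we only tag the payload (hypothetical step).
    payload["processed"] = True
    # Returned only so the sketch can be checked locally.
    return payload


if __name__ == "__main__":
    # Local simulation of the event a Pub/Sub trigger would deliver.
    body = json.dumps({"robot": "chuck-01", "battery": 87}).encode("utf-8")
    fake_event = {"data": base64.b64encode(body).decode("ascii")}
    print(handle_message(fake_event))
```

Because the handler is just a function of its event, it can be exercised locally with a hand-built dictionary before it is ever deployed.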

GCFs arrive on the scene in a fanfaronade of straightforward, rapid project-to-cloud communication. Rah rah!

At 6RS, we use GCFs to build a fast and effective process to handle live data on our autonomous mobile robots, which we call Chucks. Because of the built-in trigger functionality, GCFs are a perfect candidate for real-time data streaming. As Chuck leads associates through their tasks, analytical details and telemetry stats are constantly streaming in. GCFs give us a way to transform and store that information as queryable data, efficiently in terms of both computational cost and execution time.
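As a rough illustration of the "transform and store" step, the sketch below flattens a nested telemetry sample into a flat row and hands it to a storage callback. Every name here (`to_row`, `store`, `robot_id`, `battery_pct`, `speed_mps`) and the sample shape are assumptions made for the example; the actual 6RS schema and storage backend are not described in this post.

```python
from datetime import datetime, timezone


def to_row(sample):
    """Flatten one nested telemetry sample into a flat, queryable row."""
    ts = datetime.fromtimestamp(sample["ts"], tz=timezone.utc)
    return {
        "robot_id": sample["robot_id"],
        "recorded_at": ts.isoformat(),
        # Missing metrics become None rather than raising an error.
        "battery_pct": sample["metrics"].get("battery_pct"),
        "speed_mps": sample["metrics"].get("speed_mps"),
    }


def store(samples, sink):
    """Transform each sample and pass it to `sink`, a stand-in for a real
    database or warehouse client. Returns the number of rows written."""
    rows = [to_row(s) for s in samples]
    for row in rows:
        sink(row)
    return len(rows)


if __name__ == "__main__":
    table = []  # in-memory stand-in for a queryable table
    sample = {"robot_id": "chuck-01", "ts": 0,
              "metrics": {"battery_pct": 87, "speed_mps": 1.2}}
    store([sample], table.append)
    print(table)
```

Passing the sink in as a plain callable keeps the transformation testable on its own; in a deployed function it would be swapped for whatever client writes to the real data store.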

Why Cloud Functions?

The benefits of adopting newer tech over existing services aren’t always obvious. Why not simply maintain the existing, functioning architectures? Well, alongside the adoption of ever-evolving cloud-based options come grand shifts in company efficiency and scalability. Cutting-edge releases address the performance and feature constraints that accompany all legacy applications. Engineering teams can benefit greatly from regularly keeping a lookout for new answers to old questions. Enter: cloud functions.

GCFs offer a cheaper way to compute asynchronous tasks while making task parallelization significantly easier. Monolithic servers certainly have significant benefits, but for tasks such as managing multitudinous data streams, GCFs provide a clear-cut, atomized avenue. Within an isolated, event-processing environment separate from the full server pipeline, devs can surgically pin down the computational demand to precisely what is needed.

Since the beta 3 years ago, GCFs have continuously delivered, proving to be a robust, time-saving tool. GCFs operate in a serverless framework, which means there are no servers for us to set up or maintain. That savings in overhead has allowed more logistics features to trickle down to the consumer in a traceable way. Not only this, but the greater modularity that comes packed into these applications opens doors for rapidly testing out proofs of concept, a boon to innovative development.

With such a simplification of process, it’s important to address how security is managed. Security measures for cloud functions can be configured with identity-based or network-based access controls. Identity and access management configuration, environment variables, ingress traffic options, etc. keep the system as airtight as a traditional server setup. Additionally, the atomization of the functions allows an even more granular approach to permission regulation.

Who is the target group?

Seamless integration is the name of the game. 6RS strives to deliver new tools that can bring the gift of continuous automation to the dev workflow. The trigger-based operation of cloud functions reduces the trickiness of weaving applications together to a single, high-level component.

With minimal deployment logistics, autonomy and data engineers can easily use GCF automation to analyze snapshots of events at any point in time on the robot. The payoff of such a feature is threefold:

- instantaneous visibility for error handling, either through alerts or dashboards

- recreating scenes for dynamic exploration of interrelated components

- persistent storage of historical events for direct improvement testing

Where does it apply?

At our customer sites, GCFs are working full time in the background to enable data management with zero downtime. This rapid framework of analytics enables our system to make informed and predictive adjustments. We are constantly developing ways that systems can use real-time AI tools for increased safety, navigation, and interfacing.

Internally, autonomy and analytics teams gain value from GCFs by making telemetry data more accessible, which provides insight into streaming measurements in areas such as calibration, charging, odometry, and mapping. Timing is crucial for minimizing computational costs, and with triggers at the end of the pipelines, these measurements are being stored and analyzed at an optimal point.

With an emphasis on ease of integration, further FaaS applications are a dark horse for rapid concept testing, opening the gates for data-based features in the pipeline. Data collection is crucial for training and testing machine learning applications, like computer vision, preventative analysis, and unexpected event handling.

When can we expect more?

6RS is perpetually exploring the next stages of deliverability; our engineering teams have been utilizing functions for heavy-hitting services since mid-2018. As mentioned, FaaS have been the silent powerhouse for much of our analytic processing and storage for over 2 years.

The massive ROI on these pursuits has been apparent, and further expansion is currently being productized as a result. Engineers are building ways to advance post-processing analytics for predictive debugging, machine learning tools, and holistic system visibility. Stay tuned!

About the Author

Kayla originally pivoted careers from biology to coding and is currently working on her comp sci degree at Tufts for fun. She’s been an engineer for 5+ years, 3 of which have been at 6RS.

In her ‘free’ time Kayla is doing classwork, programming VR games, reading, or running.